𝗩𝗣 (Vice President) Career Path Study: 𝗣𝗮𝗿𝘁 𝟮: What Did Tech VPs Study & what School did they graduate from? Last week in Post #1, we kicked off a series where we analyze ~100 LinkedIn… | Sina Pourghodrat (PhD)

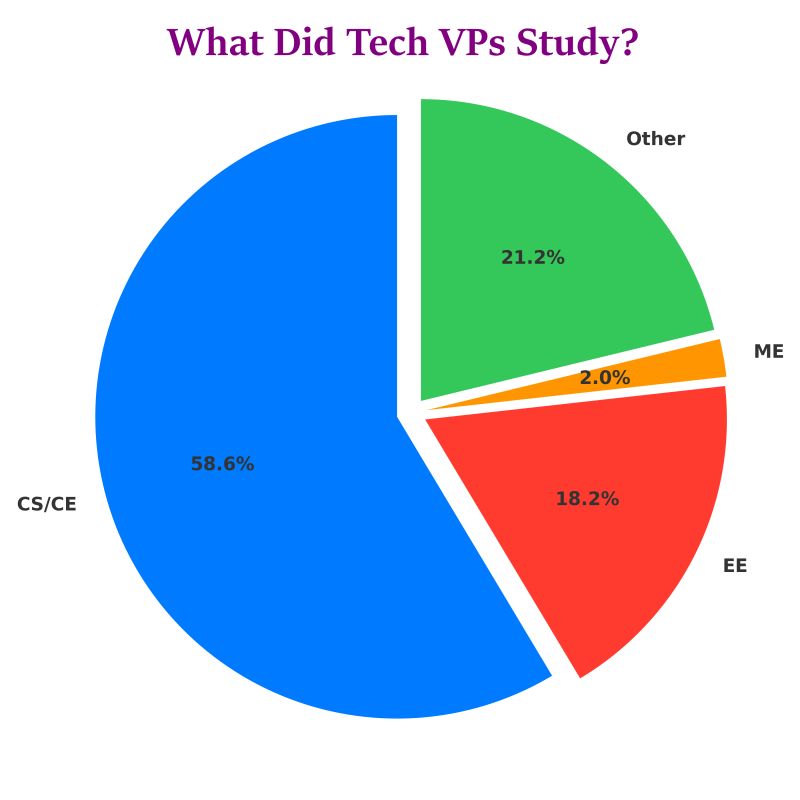

Last week in Post #1, we kicked off a series analyzing ~100 LinkedIn profiles of VPs from top tech companies: 𝘈𝘱𝘱𝘭𝘦, 𝘕𝘝𝘐𝘋𝘐𝘈, 𝘎𝘰𝘰𝘨𝘭𝘦, 𝘈𝘮𝘢𝘻𝘰𝘯, 𝘔𝘪𝘤𝘳𝘰𝘴𝘰𝘧𝘵, 𝘔𝘦𝘵𝘢, 𝘛𝘦𝘴𝘭𝘢, 𝘐𝘯𝘵𝘦𝘭, 𝘢𝘯𝘥 𝘓𝘪𝘯𝘬𝘦𝘥𝘐𝘯.

Our 𝗴𝗼𝗮𝗹 is simple: understand who these leaders are, including their backgrounds, their education, and the paths they took.

In Part 1 [https://lnkd.in/ePBjhaCT], we shared two surprising insights:
- 86.3% of VPs do not have an MBA
- Their highest degrees lean heavily toward graduate degrees (master’s & PhD)

🎓 𝗣𝗮𝗿𝘁 𝟮: 𝗪𝗵𝗮𝘁 𝗗𝗶𝗱 𝗧𝗵𝗲𝘀𝗲 𝗩𝗣𝘀 𝗦𝘁𝘂𝗱𝘆?

Based on our dataset, here are the top majors for tech VPs (grouped & normalized):
- CS/CE (Computer Science / Computer Engineering): largest share by far
- EE (Electrical Engineering)
- ME (Mechanical Engineering)

🏫 𝗪𝗵𝗲𝗿𝗲 𝗗𝗶𝗱 𝗧𝗵𝗲𝘆 𝗦𝘁𝘂𝗱𝘆?

Stanford and UC Berkeley are the most common U.S. universities where these VPs earned their highest degree, but there is 𝗻𝗼 single “must-attend” university. Yes, top schools show up, but the majority come from a wide range of universities, including many you wouldn’t expect.

📌 From an 𝗲𝗱𝘂𝗰𝗮𝘁𝗶𝗼𝗻 perspective, our dataset suggests that MBAs are relatively uncommon among these VPs and that their schools vary widely. The most frequent pattern we see is VPs holding graduate-level technical degrees, particularly in CS/CE, though this may not represent the entire industry.

---> Follow along: more insights are coming in this series. At the end of the series, we’ll release the full dataset.

-------------------------------------------------------------------------------------

For readers interested in upskilling in AI, Udacity currently has a 55% discount on its online 𝗠𝗮𝘀𝘁𝗲𝗿’𝘀 𝗶𝗻 𝗔𝗜 using code BLACKFRIDAY55 [sponsored & affiliate link]: https://lnkd.in/eTMFKN72

----------------------------------------------------------------------------------------
